2024

Stable Diffusion for Remote Sensing: Reality Check

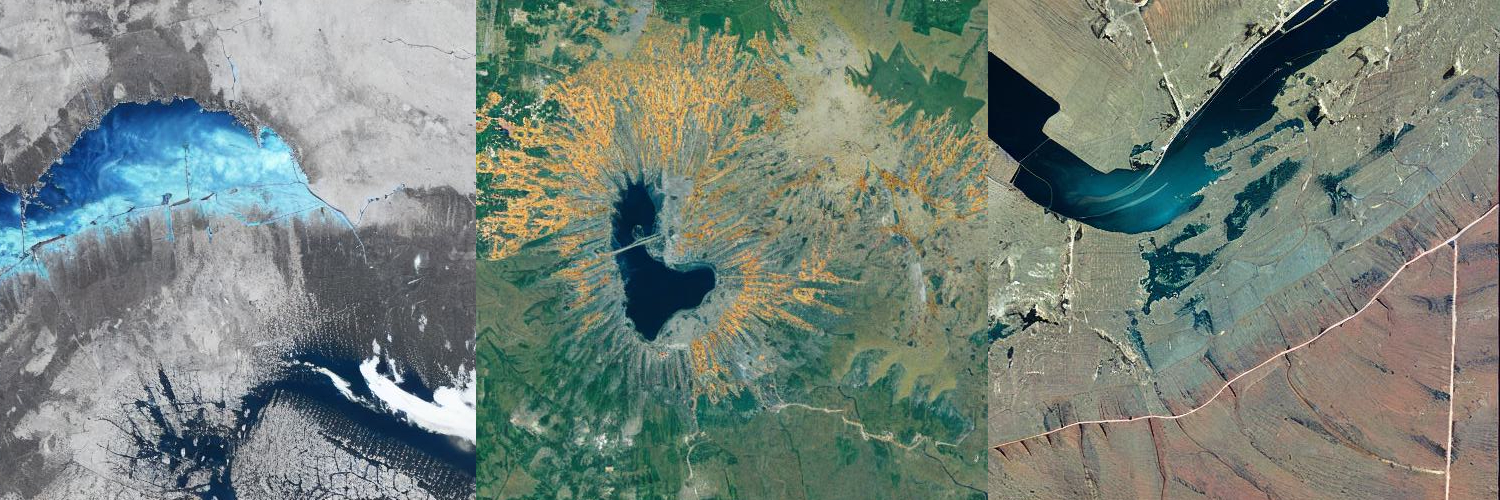

Generative techniques like Stable Diffusion have been out for a while and are starting to make their way into the field of remote sensing. I thought I’d give these models a try and see what the hype was all about. From my very brief experiments, I can say two things: one, I’m not entirely sold on the utility of generative models for real-world applications; two, these models make interesting, abstract art that resembles remote sensing imagery. Until the models and their supporting ecosystem mature enough to allow practical application development, I’ll simply spend my time toying with them to generate interesting art pieces.

2023

Hassle Free, Cloud Free

Given enough data and compute budget, there’s a sure-fire way to remove all cloud cover from Sentinel-2 satellite imagery. By enough data, I mean a whopping five years’ worth of imagery. It’s overkill, to put it mildly, but it works: every time, everywhere.
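This summary doesn't say which method the article uses. One common way to turn a long time series into a cloud-free image is a per-pixel temporal median over the cloud-masked observations, and the sketch below assumes that approach. The function name `median_composite`, the array layout `(time, height, width)`, and the availability of a boolean cloud mask are all assumptions made for illustration.

```python
import numpy as np


def median_composite(stack, cloud_mask):
    """Per-pixel median over time, ignoring observations flagged as cloudy.

    stack:      array-like of shape (time, height, width) with reflectance values
    cloud_mask: array-like of the same shape, True where a pixel is cloudy
    """
    stack = np.asarray(stack, dtype=float)
    cloud_mask = np.asarray(cloud_mask, dtype=bool)
    # Replace cloudy observations with NaN so they drop out of the median.
    masked = np.where(cloud_mask, np.nan, stack)
    # A pixel that is cloudy in every scene stays NaN in the result.
    return np.nanmedian(masked, axis=0)
```

The longer the time series, the less likely it is that a pixel is cloudy in every scene, which is where a multi-year stack earns its cost.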

2022

A Vision Transformer Encoder from Scratch

The SegFormer transformer model is a very stable and powerful computer vision model. In this article I take a deep dive into the inner workings of the model’s encoder in an attempt to understand why it performs so well. Read on to find out what makes this vision model so awesome.

2021

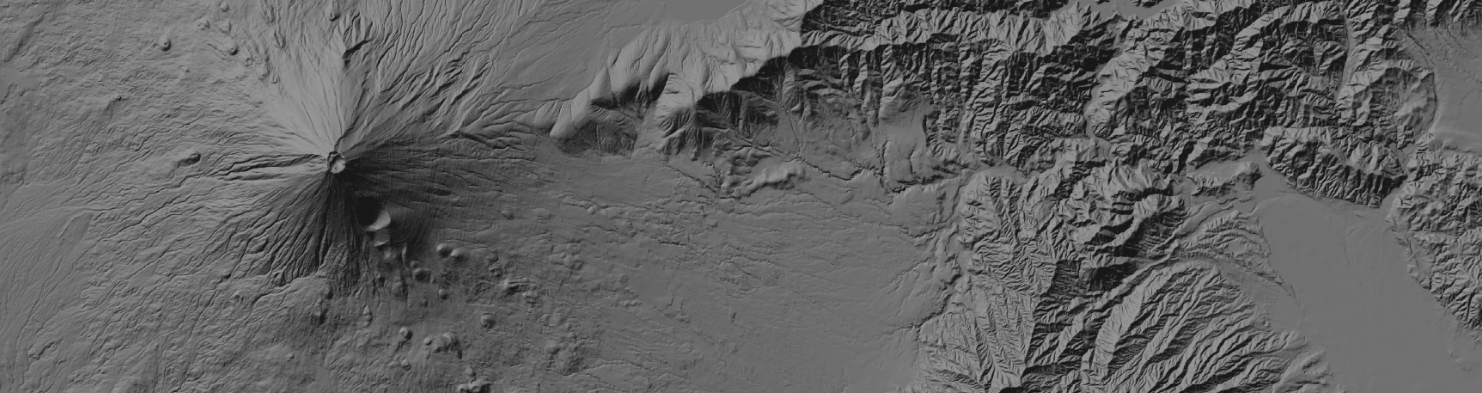

Processing DEM Data for Japan

Japan’s Digital Elevation Model

The Geospatial Information Authority of Japan hosts one of my all-time favorite datasets: the digital elevation model (DEM) of Japan. This is such a cool dataset, and I’ve found a really nice technique for visualizing it seamlessly over the entire country.

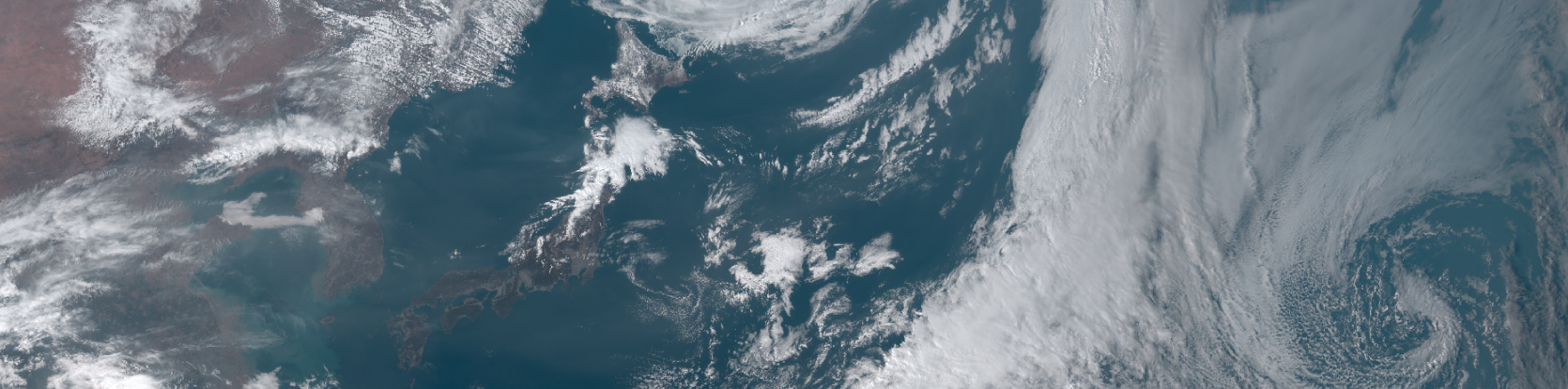

Himawari 8 Geostationary Weather Satellite

JAXA provides free, limited-use access to top-of-atmosphere reflectance and other derived data from the Himawari 8 geostationary satellite. You can request access to the data here. I’ve put together a helper script that downloads the data files from JAXA’s FTP server and extracts one of the bands (cloud top height) as a GeoTIFF. To use it, fill in your own account ID and password along with a target date; the script then downloads the hourly data for that date from the JAXA server.
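The download half of such a script might look roughly like the sketch below. This is not the helper script itself: the host, the credentials, and the remote path layout in `PATH_TEMPLATE` are hypothetical placeholders, and the GeoTIFF extraction step is left out entirely. Only the standard library's `ftplib` and `datetime` are used.

```python
from datetime import date, datetime, timedelta
from ftplib import FTP
from pathlib import Path

# Hypothetical placeholders: substitute the details issued with your JAXA account.
FTP_HOST = "ftp.example.invalid"
ACCOUNT_ID = "your_account_id"
PASSWORD = "your_password"
# Hypothetical path layout; the real directory structure on the server may differ.
PATH_TEMPLATE = "/hypothetical/{ts:%Y%m}/{ts:%d}/cloud_{ts:%Y%m%d_%H%M}.nc"


def hourly_timestamps(target: date) -> list[datetime]:
    """Return the 24 on-the-hour timestamps for the target date."""
    start = datetime(target.year, target.month, target.day)
    return [start + timedelta(hours=h) for h in range(24)]


def remote_paths(target: date) -> list[str]:
    """Build one remote file path per hour from the (assumed) template."""
    return [PATH_TEMPLATE.format(ts=ts) for ts in hourly_timestamps(target)]


def download_day(target: date, out_dir: Path) -> None:
    """Download every hourly file for the target date into out_dir."""
    out_dir.mkdir(parents=True, exist_ok=True)
    with FTP(FTP_HOST) as ftp:
        ftp.login(user=ACCOUNT_ID, passwd=PASSWORD)
        for path in remote_paths(target):
            local = out_dir / Path(path).name
            with open(local, "wb") as fh:
                # Stream the remote file to disk in binary mode.
                ftp.retrbinary(f"RETR {path}", fh.write)
```

Calling `download_day(date(2021, 1, 2), Path("himawari"))` would attempt 24 downloads, one per hour, once the placeholders are replaced with real values.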